Hybrid AI: Diverse models, reduced risks

In late 2022, when ChatGPT first emerged, many business leaders entered a phase of enthusiasm. Here, finally, was a technology that seemed able to answer any question, generate any content, and handle virtually any request. However, less than two years later, real-world production deployments have painted a more nuanced picture: standalone GenAI excels in experimentation but becomes fragile when placed at the core of mission-critical business processes, where a single error is not merely a technical issue but a potential legal, financial, or even life-threatening risk.

This is why Hybrid AI, an architecture that integrates multiple layers of models and data within a unified system, is rapidly becoming the strategic choice for organizations seeking to move beyond the proof-of-concept stage. These challenges and architectural considerations were also highlighted at the Lenovo x FPT TechDay 2026 event.

Why is a single model not enough?

To understand Hybrid AI, it is essential to start with the limitations of Generative AI (GenAI) in real enterprise environments. Large language models (LLMs) are primarily trained on public data – such as the internet, books, news, and open-source code. While this gives them impressive language capabilities, it also creates a critical gap: they lack awareness of your bank’s credit approval workflows, your hospital’s internal clinical protocols, or the fact that Product X had a known security vulnerability last quarter.

The direct consequence is the phenomenon of “hallucination” – where the model confidently generates incorrect or fabricated information that does not align with real-world business data. In highly regulated environments such as finance or healthcare, this is a critical failure point. A lending decision based on flawed risk assessment, or a treatment recommendation that deviates from clinical guidelines, is unacceptable, even if the error originates from AI.

In addition, there is the issue of explainability. Regulatory and compliance frameworks in finance (e.g., Basel, PCI-DSS) and healthcare (e.g., HIPAA) require not only correct decisions, but also the ability to demonstrate why a decision was made, and based on which data and rules. A “black-box” model, no matter how accurate, cannot meet this requirement.

What is Hybrid AI – and why it is more than just RAG

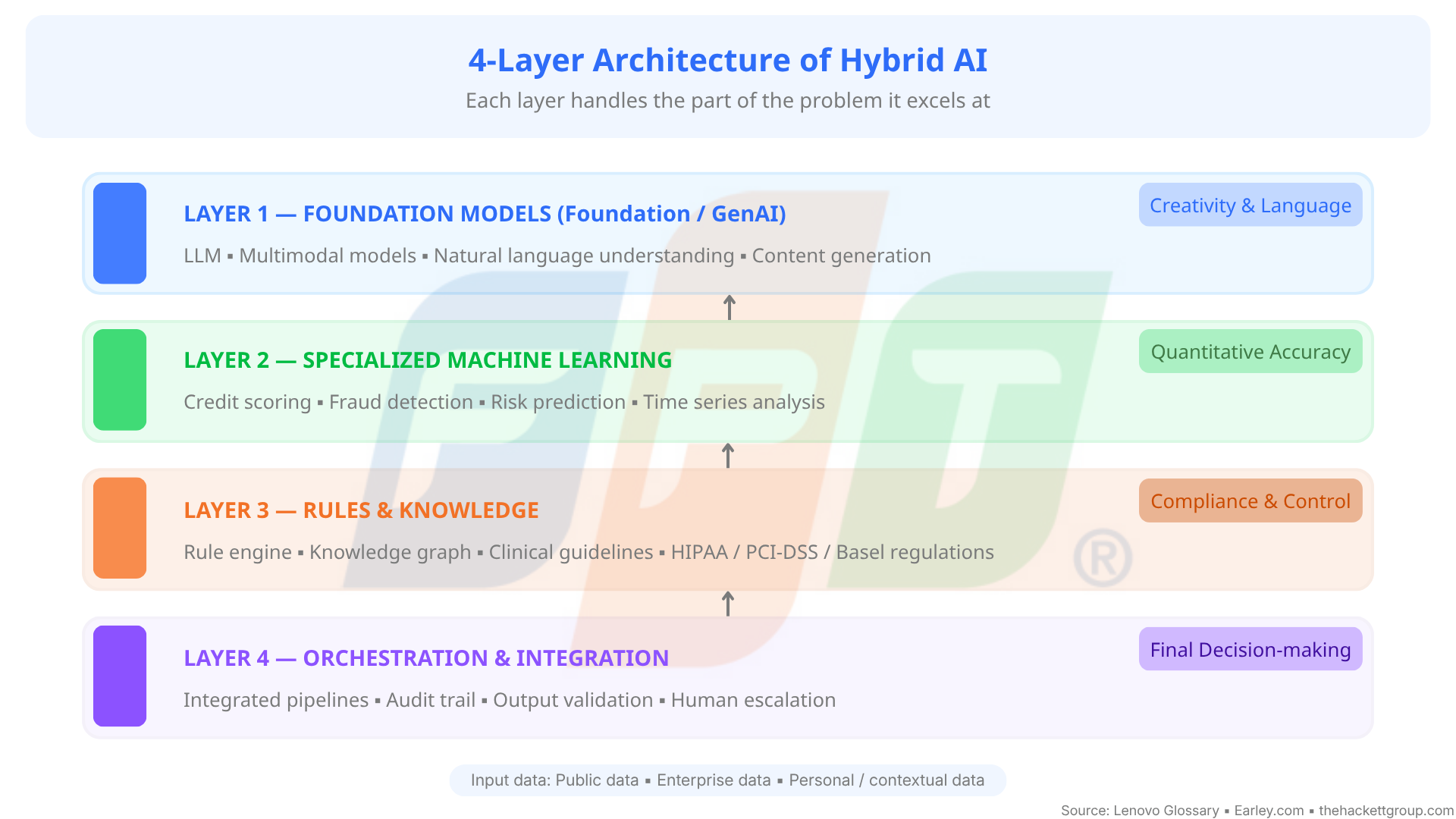

At its core, Hybrid AI is an intentional architecture that combines multiple AI techniques: statistical machine learning, deep learning, rule-based systems, symbolic AI, knowledge graphs, and GenAI, within a coordinated pipeline. No single component “does everything”; each layer addresses the part of the problem it is best suited for.

A simple analogy: if GenAI is a smart new employee, quick to understand language and articulate ideas, then Hybrid AI is the entire enterprise system, made up of that employee together with risk control, legal, operational databases, and internal approval workflows. The employee proposes; the system validates, adjusts, and makes the final decision.

It is important to distinguish Hybrid AI from Retrieval-Augmented Generation (RAG), a widely adopted approach in 2023-2024. RAG enhances GenAI by retrieving relevant documents before generating responses. While this is a meaningful improvement, it remains limited: it lacks quantitative ML prediction layers, rule engines for output validation, and knowledge graphs for complex relational reasoning. Hybrid AI expands this into a truly multi-layered architecture.

A typical enterprise Hybrid AI system consists of four coordinated layers:

- The foundation model layer (GenAI/LLMs) for language understanding and content generation

- The specialized machine learning layer (e.g., risk scoring, anomaly detection) for high-precision quantitative analysis

- The rules and knowledge layer (rule engines, knowledge graphs, clinical guidelines, regulatory frameworks) to validate and anchor outputs in business reality

- The orchestration layer to coordinate and govern the entire decision flow
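The four layers above can be sketched as a small pipeline. This is only an illustrative sketch, not a reference implementation: every function name, the `Decision` record, and the numeric limits are assumptions made for the example, and the foundation and ML layers are stand-ins for real model calls.

```python
from dataclasses import dataclass, field


@dataclass
class Decision:
    """Record of what each layer contributed (hypothetical structure)."""
    request: str
    draft: str = ""
    risk_score: float = 0.0
    violations: list[str] = field(default_factory=list)
    approved: bool = False


def foundation_layer(request: str) -> str:
    """Stand-in for a GenAI/LLM call that drafts a response."""
    return f"Draft response to: {request}"


def ml_layer(amount: float) -> float:
    """Stand-in for a trained risk model: maps an amount to a score in [0, 1]."""
    return min(amount / 100_000, 1.0)


def rules_layer(amount: float, risk_score: float) -> list[str]:
    """Hard business rules; the limits are invented for illustration."""
    violations = []
    if amount > 50_000:
        violations.append("amount exceeds auto-approval limit")
    if risk_score > 0.8:
        violations.append("risk score above threshold")
    return violations


def orchestrate(request: str, amount: float) -> Decision:
    """Orchestration layer: runs the other layers in order and decides."""
    decision = Decision(request=request)
    decision.draft = foundation_layer(request)
    decision.risk_score = ml_layer(amount)
    decision.violations = rules_layer(amount, decision.risk_score)
    # Approve only when no rule was violated.
    decision.approved = not decision.violations
    return decision
```

In this sketch the language model only drafts text. The approval itself depends on the ML score and the rules, which is the division of labor the layered design aims for.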

Financial use case: When “black boxes” are not acceptable

One of the most prominent applications of Hybrid AI is in banking – where fraud detection requires both millisecond-level speed and auditability.

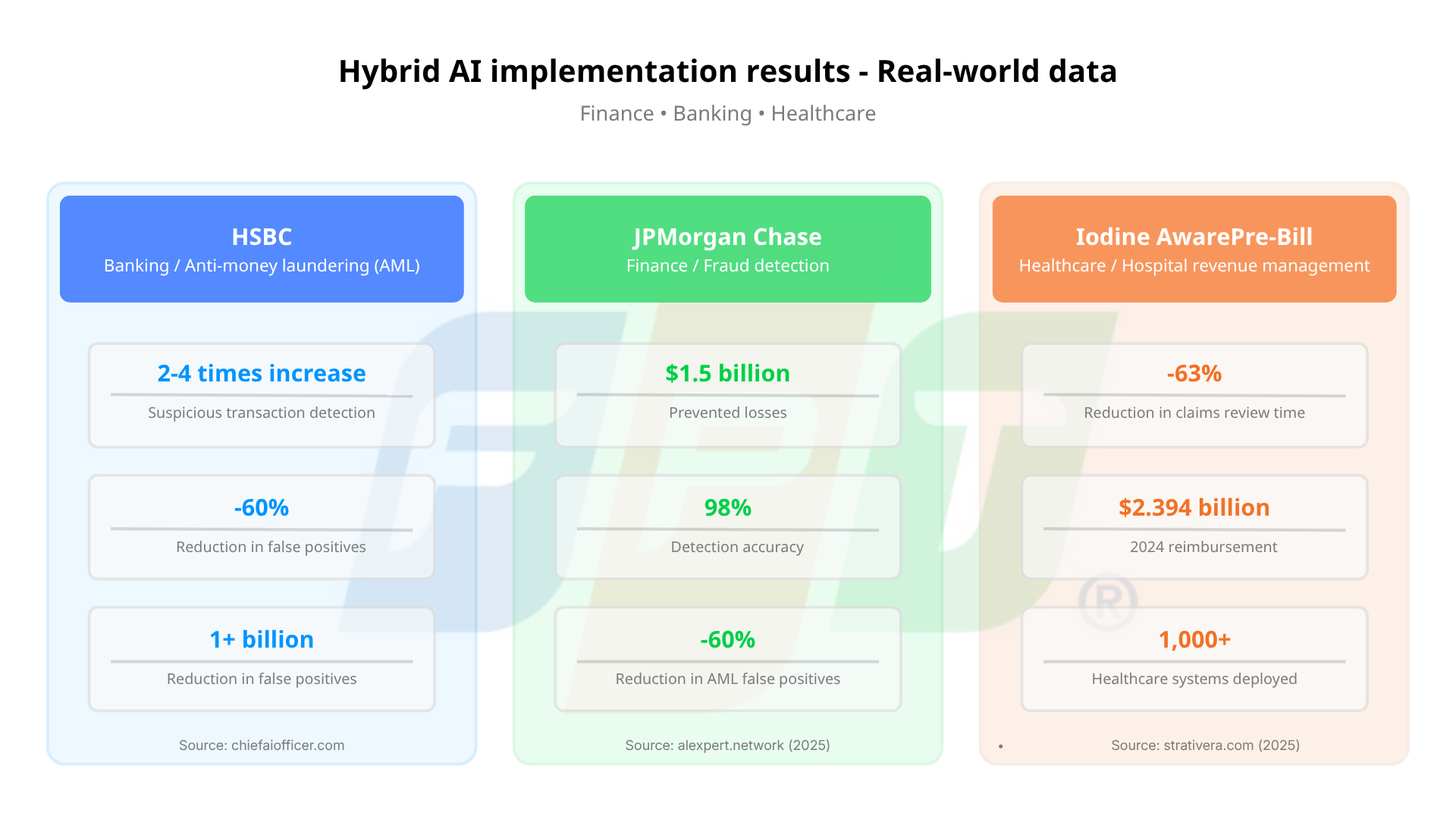

HSBC has deployed AI systems processing over one billion transactions per month, detecting suspicious activities 2-4 times more effectively than traditional methods while reducing false positives by 60%. The key is not just the performance metrics; it is the underlying architecture. The system combines behavioral machine learning models with hard-coded AML (Anti-Money Laundering) rules within a pipeline that generates full audit trails for regulators.

Similarly, JPMorgan Chase, with over 450 AI use cases and a $17 billion technology budget in 2024, has implemented fraud detection systems preventing $1.5 billion in losses with 98% accuracy. In AML monitoring, AI reduces false positives by 60% by identifying suspicious patterns across millions of daily transactions.

These figures are impressive, but more importantly, they highlight how hybrid architectures allow banks to leverage ML for pattern recognition while maintaining transparency through verifiable rule layers, something a single model cannot achieve.
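To make the combination of an ML score, hard-coded rules, and an audit trail concrete, here is a minimal sketch. It does not describe how HSBC or JPMorgan Chase actually build their systems: the scoring formula, the thresholds, the watchlist, and all names are hypothetical placeholders chosen for illustration.

```python
LARGE_AMOUNT = 10_000          # illustrative limit, not a regulatory figure
WATCHLIST_COUNTRIES = {"XX"}   # placeholder country code
SCORE_THRESHOLD = 0.7          # illustrative cut-off for the ML score


def behavior_score(txn: dict, history_avg: float) -> float:
    """Toy stand-in for a behavioral ML model: measures how far the amount
    deviates from the customer's historical average, capped at 1.0."""
    if history_avg <= 0:
        return 1.0
    return min(abs(txn["amount"] - history_avg) / (10 * history_avg), 1.0)


def aml_rules(txn: dict) -> list[str]:
    """Deterministic rules; each hit is named so it can be audited."""
    hits = []
    if txn["amount"] >= LARGE_AMOUNT:
        hits.append("LARGE_AMOUNT")
    if txn["country"] in WATCHLIST_COUNTRIES:
        hits.append("WATCHLIST_COUNTRY")
    return hits


def assess(txn: dict, history_avg: float, audit_log: list[dict]) -> bool:
    """Flag a transaction and record why, so the decision can be explained."""
    score = behavior_score(txn, history_avg)
    hits = aml_rules(txn)
    flagged = bool(hits) or score >= SCORE_THRESHOLD
    audit_log.append({
        "txn_id": txn["id"],
        "ml_score": round(score, 3),
        "rules_hit": hits,
        "flagged": flagged,
    })
    return flagged
```

Each entry in `audit_log` records both the model score and the named rules that fired, so a reviewer can see which layer drove a given flag.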

Healthcare use case: When GenAI needs “clinical supervision”

In healthcare, the stakes are even higher, as AI errors can directly impact patient safety. This sector is also among the fastest-growing adopters of Hybrid AI.

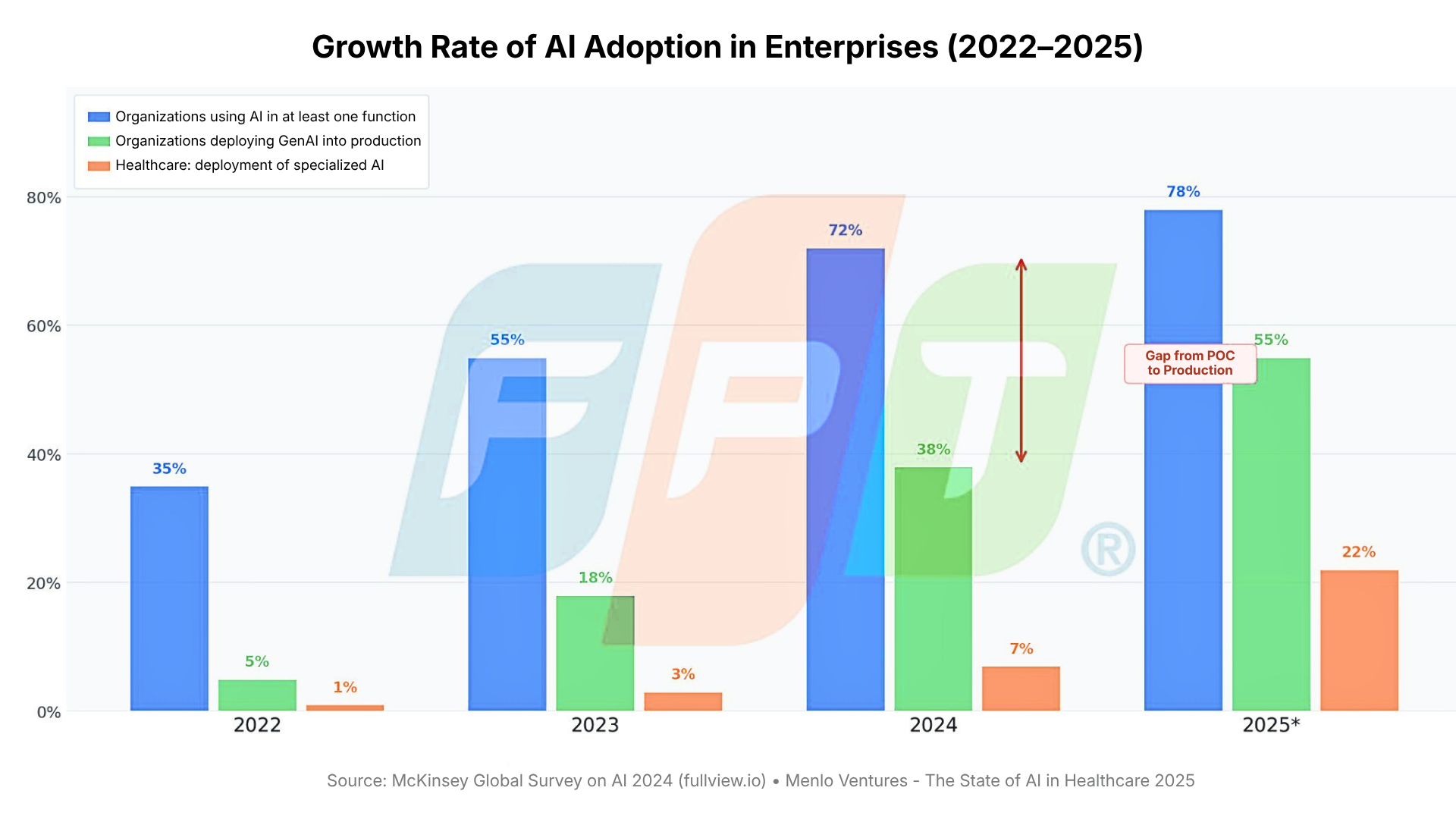

According to Menlo Ventures, 22% of healthcare organizations have deployed specialized AI tools – 7x growth compared to 2024 and 10x compared to 2023. This acceleration reflects the maturity of Hybrid AI architectures that enable safe deployment in highly regulated clinical environments.

Consider Revenue Cycle Management systems. The Iodine AwarePre-Bill platform has reduced claim review time by 63%, supporting over $2.394 billion in reimbursements across 1,000+ healthcare systems in 2024. This is not a simple GenAI chatbot reading patient records. It integrates NLP for clinical data extraction, ML models for ICD code prediction, and rule engines to ensure compliance with insurance regulations, all within a HIPAA-compliant pipeline.

Critically, in healthcare, the rules and knowledge layer is not just a quality control mechanism; it is a legal requirement. Any diagnostic support system must demonstrate that its recommendations align with established clinical guidelines, not just patterns learned from public data.

Implementation patterns: Three common approaches

From real-world deployments transitioning from proof-of-concept to production, three dominant Hybrid AI patterns have emerged:

- GenAI as the front-end, ML as the core decision engine

GenAI handles conversational interfaces, explanation, and natural language parsing, converting user input into structured data for specialized ML models to make decisions. This is ideal for credit scoring, operational risk assessment, and supply chain prioritization.

- Enhanced RAG with knowledge layers

Beyond simple retrieval, ML ranks and filters documents, which are then enriched via knowledge graphs before GenAI generates responses. This pattern is effective for clinical decision support, legal research, and internal technical knowledge systems.

- Post-processing validation via rule engines

GenAI generates outputs, but before reaching the user, they pass through strict validation layers: rule engines check compliance, knowledge graphs ensure consistency, and confidence thresholds determine whether to escalate to human review. This architecture is critical in zero-tolerance domains such as surgery, high-value financial approvals, and regulatory compliance.
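The third pattern, post-processing validation, can be sketched as a single gate that runs after generation. The banned phrases, the product set standing in for a knowledge graph lookup, and the default threshold are all assumptions made for this example; a production system would plug in its own rule engine and knowledge store.

```python
from enum import Enum


class Outcome(Enum):
    RELEASE = "release"
    HUMAN_REVIEW = "human_review"
    BLOCK = "block"


# Hypothetical compliance rules and a stand-in for a knowledge graph lookup.
BANNED_PHRASES = ("guaranteed approval", "no risk")
KNOWN_PRODUCTS = {"Product X", "Product Y"}


def validate(
    text: str,
    mentioned_products: set[str],
    confidence: float,
    threshold: float = 0.85,
) -> Outcome:
    """Decide what happens to a generated answer before a user sees it."""
    lowered = text.lower()
    # 1. Rule engine: hard compliance violations are blocked outright.
    if any(phrase in lowered for phrase in BANNED_PHRASES):
        return Outcome.BLOCK
    # 2. Knowledge check: references to unknown entities go to a human.
    if not mentioned_products <= KNOWN_PRODUCTS:
        return Outcome.HUMAN_REVIEW
    # 3. Confidence threshold: low-confidence answers are escalated.
    if confidence < threshold:
        return Outcome.HUMAN_REVIEW
    return Outcome.RELEASE
```

The checks run from strictest to most lenient, so a compliance violation is never released simply because the model reported high confidence.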

Security is not an add-on – it is architectural

A commonly overlooked aspect of Hybrid AI is data security. While pure GenAI often requires sending data to external APIs, Hybrid AI enables a “model-to-data” approach rather than “data-to-model.” Sensitive data remains on-premises or within private VPC environments, while only anonymized, non-sensitive information is exposed to external models when necessary.

This is not merely a technical preference; it is a prerequisite for deploying AI in regulated industries such as finance and healthcare. Without such an architecture, most high-value use cases involving sensitive data are practically infeasible.
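One simple way to picture exposing only anonymized information to an external model is to swap sensitive values for placeholder tokens before the text leaves the private environment, then restore them locally. The sketch below assumes just two kinds of sensitive data (e-mail addresses and 10 to 16 digit account numbers) and uses deliberately simple regular expressions; real deployments would need far more thorough detection.

```python
import re

# Deliberately simple patterns, for illustration only.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
ACCOUNT = re.compile(r"\b\d{10,16}\b")


def anonymize(text: str) -> tuple[str, dict[str, str]]:
    """Replace sensitive values with tokens; the mapping stays in-house."""
    mapping: dict[str, str] = {}

    def replacer(kind: str):
        def repl(match: re.Match) -> str:
            token = f"<{kind}_{len(mapping) + 1}>"
            mapping[token] = match.group(0)
            return token
        return repl

    text = EMAIL.sub(replacer("EMAIL"), text)
    text = ACCOUNT.sub(replacer("ACCOUNT"), text)
    return text, mapping


def restore(text: str, mapping: dict[str, str]) -> str:
    """Put the original values back into text returned by the external model."""
    for token, original in mapping.items():
        text = text.replace(token, original)
    return text
```

Only the masked text would be sent to an external API. The `mapping` dictionary never leaves the private environment, so the original values are restored locally after the response comes back.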

From tactics to strategy

Hybrid AI is not limited to any single industry. At a macro level, 78% of organizations now use AI in at least one business function, up from 55% in 2023. However, the proportion of AI initiatives successfully scaled to production remains significantly lower than those in pilot stages. This gap is precisely where Hybrid AI creates value.

The real shift is not technological; it is conceptual. Enterprises are moving from asking “Which AI model is best?” to “Which architecture enables trustworthy decision-making in our specific context?” This is a systems design question, not a tool selection exercise.

Over the next 3-5 years, most enterprise AI applications will inherently adopt hybrid architectures, not because it is a trend, but because it is the only viable way to deliver reliable value in environments where the cost of error is high.

References

- Information Age – What is Hybrid AI?

- Earley – What is Hybrid AI Approach to Data Discovery

- The Hackett Group – Glossary: Hybrid AI

- Lenovo – Hybrid AI Glossary

- NTT Data – Not All Hallucinations Are Bad: Constraints and Benefits of Generative AI

- Zenodo – Hybrid AI in Healthcare Financial Security (2024)

- IJCAT – Hybrid AI for Cybersecurity and Fraud Detection

- DFKI – On Explanations for Hybrid Artificial Intelligence

- Tucuvi – Hybrid AI in Healthcare

- Ramp – What is Hybrid AI

- ICT Vietnam – Tại sao AI lai là xu hướng tiếp theo (Why hybrid AI is the next trend)

- Aptech FPT – Hybrid AI: Cầu nối giữa lý thuyết và thực tiễn (Hybrid AI: The bridge between theory and practice)

- HSBC AI Case Study – Chief AI Officer Blog, 2025: HSBC detects 2-4 times more suspicious transactions and reduces false positives by 60%. https://chiefaiofficer.com/blog/how-hsbcs-ai-catches-4x-more-financial-criminals-while-cutting-false-alarms-by-60/

- JPMorgan Chase AI Case Study – AI Expert Network, 2025: Fraud detection system prevents $1.5 billion in losses with 98% accuracy; AML false positives reduced by 60%. https://aiexpert.network/ai-at-jpmorgan/

- Iodine AwarePre-Bill – Strativera, 2025: 63% reduction in claim review time; $2.394 billion in reimbursements across 1,000+ healthcare systems in 2024. https://strativera.com/ai-healthcare-business-transformation-frameworks-2025/

- Menlo Ventures – 2025: The State of AI in Healthcare: 22% of healthcare organizations have deployed specialized AI, a 7x increase over 2024. https://menlovc.com/perspective/2025-the-state-of-ai-in-healthcare/

- Fullview.io / AI Statistics 2025 – 78% of organizations used AI in at least one business function in 2024-2025. https://www.fullview.io/blog/ai-statistics

Exclusive article by Mr. Le Van Hoang Trung, Deputy Director, Infrastructure Services Center, FPT IS, FPT Corporation

Mr. Lê Văn Hoàng Trung is a seasoned expert with over 17 years of experience in enterprise IT solution consulting. He was recognized in the Top 100 FPT in 2016 and ranked Top 6 in the “Trạng FPT” talent competition in 2013 for his outstanding technical and consulting contributions. He has served as Lead Solution Consultant for numerous large-scale infrastructure projects valued between VND 50-200 billion, with deep expertise across key industries including manufacturing, retail, oil and gas, and transportation. His work focuses on helping enterprises design and optimize IT infrastructure, data centers, network systems, and digital platforms to support operations and accelerate digital transformation.